Against Special Casing

AI and a Future for Open-Ended Software

November 14, 2024

Part 1: The Mind and Body

When we think of the mind, we think of the human mind. When we think of the body, we think of the human body. We struggle to project ourselves into the experience of another mammal such as a bat¹, let alone experiential modes even more distinct from our own.

Our understanding of intelligence, of what constitutes an intelligent being, and even of what defines an individual is deeply rooted in our knowledge of ourselves and our close animal relatives.

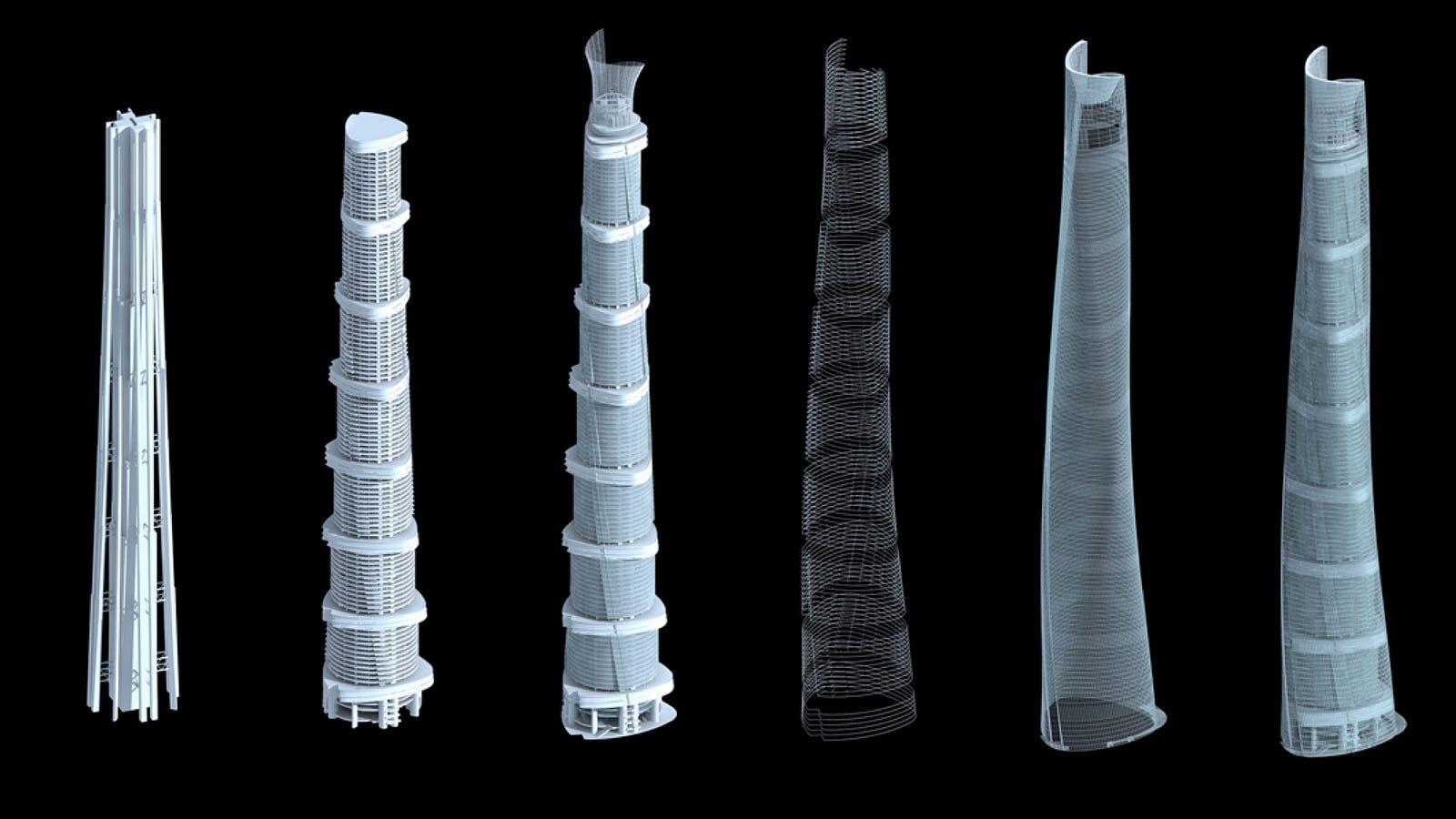

The human brain is just one of many network architectures that occur in nature. The human lifespan, mode of reproduction, and capacity for tissue regeneration likewise represent only a narrow corridor of life on this planet.

Our human-centric mental model of mind and body gives rise to pervasive analogies that in turn guide the design of our technological systems: AI models (minds) and their corresponding interfaces (bodies).

These analogies will serve only to limit software’s evolution.

While the comparison of AI to the human mind has its own drawbacks, I am here concerned with the body analogy.

Rather than vertebrate minds and bodies, we should take inspiration from other forms of life, such as octopi, or better yet, fungi, which offer alternative models of intelligence and adaptability.

In Entangled Life, Merlin Sheldrake notes that “animals put food in their bodies, whereas fungi put their bodies in the food.”²

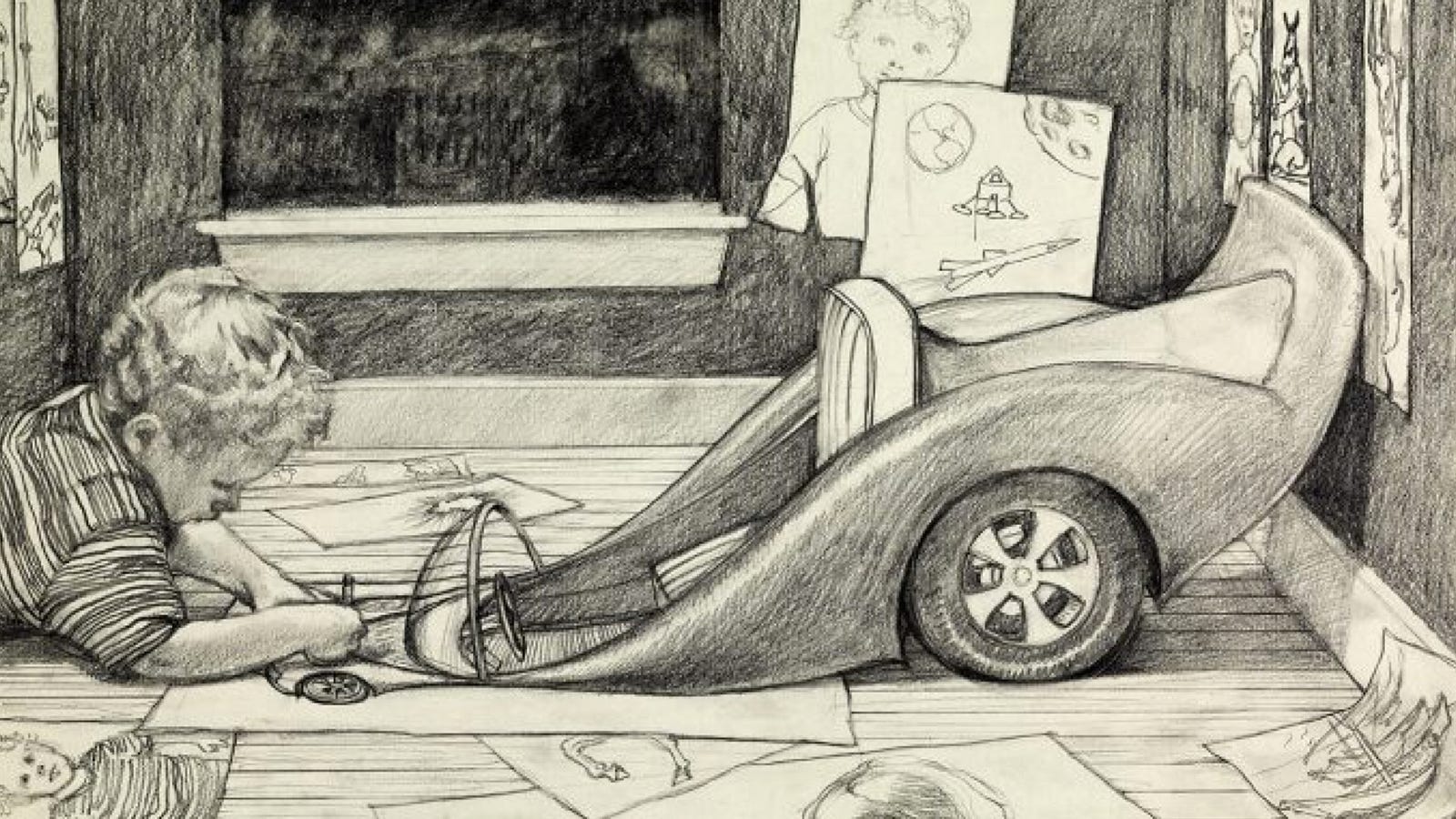

We have long been dealing with animals. Word, Photoshop, and Maya are all vertebrates. They have rigidly defined bodies: workspaces, panels, and menus. They move documents and data through well-defined pathways and organs. The individual is not adaptive, or is minimally so. Change instead occurs over generations of the software.

Species that wrap themselves around their food and become enmeshed in it; species built of largely undifferentiated material that can be spun into special-purpose structures (such as a mushroom) when the time is right: these are the biological analogies that will lead software into a new age.

Part 2:

Computers in the 1980s and 1990s, when user interfaces first came to life, were quite impoverished by today’s standards. Software developers often resorted to hardware-specific optimizations that could make the difference between shipping and not shipping, but that did not generalize or age well.

Graphics functionality, including user interface rendering, was often the focus of these heroic programming efforts because of the inherent conflict between the computational complexity of graphics calculations and the need for real-time interactivity.

Under these conditions, it was a miracle to ship anything at all, and certainly no one had the luxury of worrying about the ways in which statically defined software and interfaces would limit the user.

...

1 Nagel, Thomas. "What Is It Like to Be a Bat?" The Philosophical Review, vol. 83, no. 4, Duke University Press, Oct. 1974, pp. 435-450.

2 Sheldrake, Merlin. Entangled Life: How Fungi Make Our Worlds, Change Our Minds & Shape Our Futures. Random House, 2021, p. 51.